Hand Actions and the Brain

About Dr. Jody Culham

Jody Culham is a Professor in the Department of Psychology and the Neuroscience Program at Western University.

Dr. Culham’s lab uses cognitive neuroscience approaches to investigate how the human brain uses sensory information to perceive the world and guide hand actions such as reaching, grasping and tool use.

She was one of the first to use brain imaging techniques to discover and characterize human brain areas involved in hand actions. To do so, her lab developed novel techniques to present real objects in the brain scanner and have research participants perform real hand actions upon them.

This emphasis on real objects and real actions has been an ongoing theme in her lab. One recent line of research compares how the brain responds to real objects versus photographs matched for features such as size and viewpoint. This work suggests that, although photos are commonly used as a proxy for the objects they represent, the brain does not process them in the same way. Another recent line of research has participants use real tools in the brain scanner.

The lab uses a variety of cognitive neuroscience techniques including functional magnetic resonance imaging (fMRI), behavioral studies, neurostimulation, and occasional testing of unique neuropsychological patients with interesting patterns of behavior.

Study Results

One recent study conducted in Dr. Culham’s lab by former PhD student Jason Gallivan investigated how the human brain plans actions with tools compared to actions with the hand (Gallivan et al., 2013). Past fMRI research from the Culham Lab and others had discovered numerous human brain regions activated when subjects perform actions such as reaching out to touch an object or grasping it. Other research had discovered human brain regions involved in perceiving and thinking about tools. But almost no prior research had studied the brain areas involved in actually using a tool. The researchers wondered what would happen when subjects used a real tool to reach and touch an object or to grasp it. Would brain areas that plan and execute hand actions also be involved in tool actions?

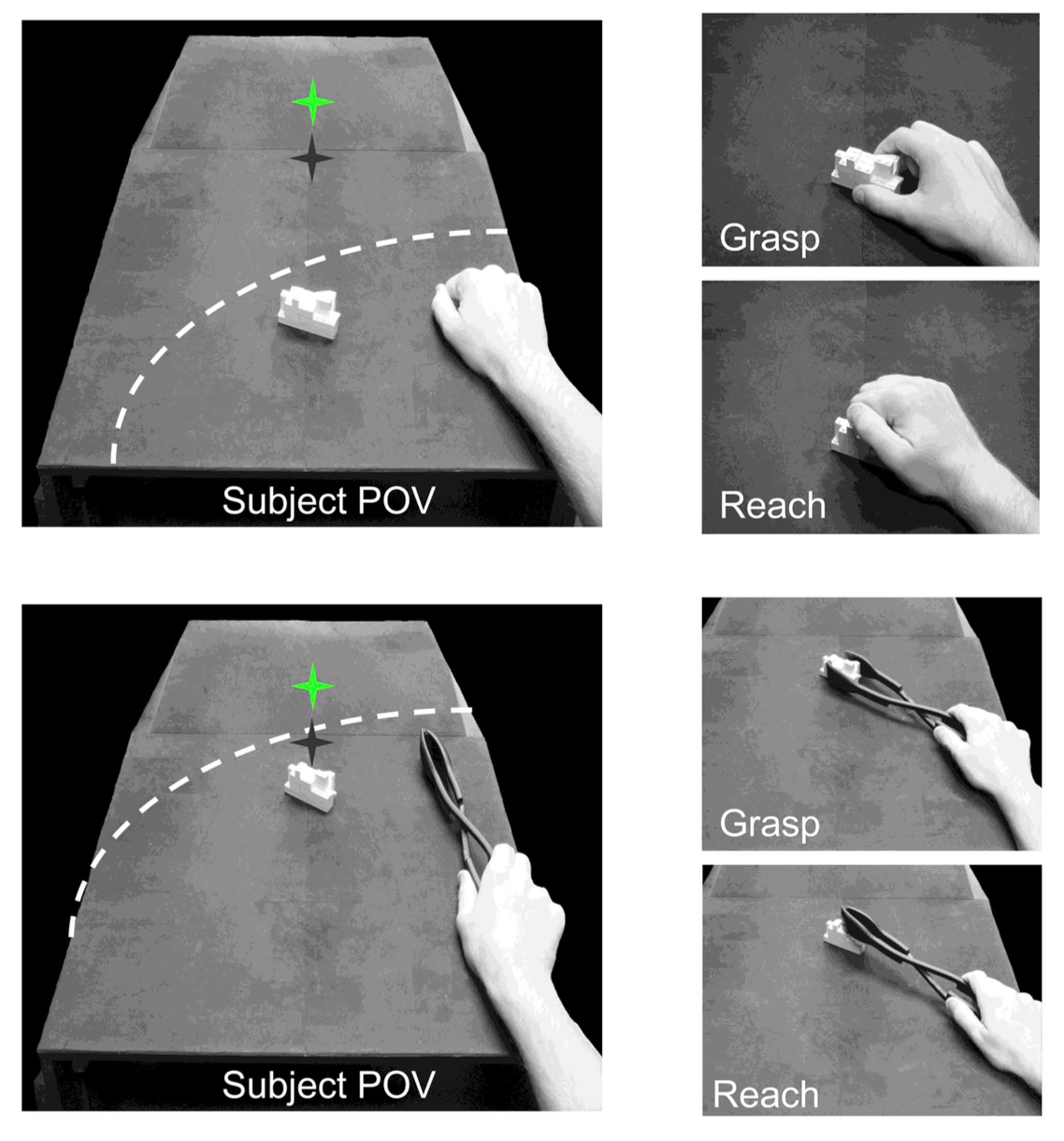

Human participants had their brain activity scanned using functional magnetic resonance imaging (fMRI) as they reached towards or grasped an object using either their hand or a set of plastic tongs. The researchers used “brain reading” techniques to see whether patterns of brain activation seconds before the actions began could be used to predict which action the participant intended to perform and whether these patterns were similar when the tool and hand were used.

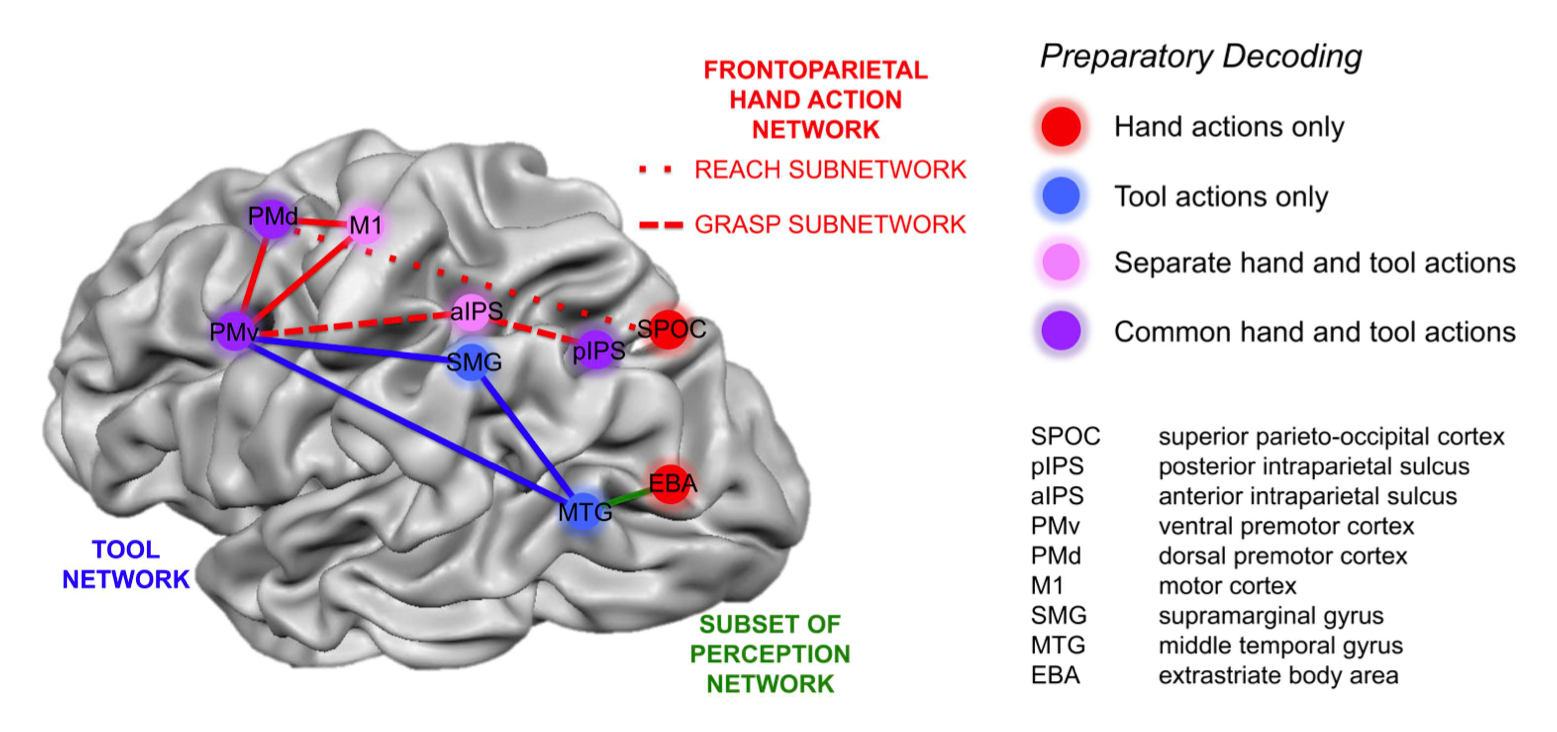

In the seconds before the hand began moving, many brain regions contained useful information about which action was going to be performed. Some regions coded the upcoming action only when it involved the hand alone. Other regions coded the upcoming action only when it involved the tool. Most interestingly, several regions in the parietal and frontal cortex coded the upcoming action regardless of whether it involved the hand or the tool. This study provides evidence that tool use relies both on areas that evolved to control actions with the hand alone and on evolutionarily newer brain areas involved in understanding and using tools.
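To make the "brain reading" logic more concrete, here is a toy simulation. It is a minimal sketch under stated assumptions, not the analysis used by Gallivan et al. (2013): the voxel patterns are randomly generated (no real data), the two regions are hypothetical, and a simple nearest-centroid classifier stands in for whatever classifier and preprocessing the study actually used. A decoder is trained on hand trials to tell grasping from reaching, then tested on new hand trials and on tool trials. If a region codes the action the same way for both hand and tool, the hand-trained decoder should also work on tool trials. If the region codes the action for the hand only, accuracy on tool trials should fall to chance (about 0.5). Timing is ignored: each simulated pattern simply stands for activity before movement onset.

```python
import math
import random

# All values below are hypothetical, chosen only for illustration.
N_VOXELS = 50    # number of voxels in a simulated region
N_TRIALS = 50    # trials per action per condition
NOISE_SD = 1.0   # trial-to-trial noise (arbitrary units)


def random_pattern(rng):
    """A made-up mean activity pattern across voxels."""
    return [rng.gauss(0.0, 1.0) for _ in range(N_VOXELS)]


def simulate(mean_pattern, rng):
    """Noisy single-trial patterns scattered around a mean pattern."""
    return [[m + rng.gauss(0.0, NOISE_SD) for m in mean_pattern]
            for _ in range(N_TRIALS)]


def train(trials_by_action):
    """Nearest-centroid decoder: average the training patterns per action."""
    return {
        action: [sum(voxel) / len(trials) for voxel in zip(*trials)]
        for action, trials in trials_by_action.items()
    }


def predict(centroids, pattern):
    """Guess the action whose centroid is closest (Euclidean distance)."""
    return min(centroids, key=lambda action: math.dist(centroids[action], pattern))


def accuracy(centroids, trials_by_action):
    """Fraction of trials whose predicted action matches the true action."""
    hits = total = 0
    for action, trials in trials_by_action.items():
        for pattern in trials:
            hits += predict(centroids, pattern) == action
            total += 1
    return hits / total


def simulate_region(shared, rng):
    """Return (hand_train, hand_test, tool_test) for one hypothetical region.

    shared=True: tool actions evoke the same action-specific patterns as the hand.
    shared=False: the region codes actions for the hand only; grasping and
    reaching with the tool produce the same, action-blind pattern.
    """
    grasp, reach = random_pattern(rng), random_pattern(rng)
    hand_train = {"grasp": simulate(grasp, rng), "reach": simulate(reach, rng)}
    hand_test = {"grasp": simulate(grasp, rng), "reach": simulate(reach, rng)}
    if shared:
        tool_test = {"grasp": simulate(grasp, rng), "reach": simulate(reach, rng)}
    else:
        tool_mean = random_pattern(rng)
        tool_test = {"grasp": simulate(tool_mean, rng),
                     "reach": simulate(tool_mean, rng)}
    return hand_train, hand_test, tool_test


rng = random.Random(0)
results = {}
for name, shared in [("shared region", True), ("hand-only region", False)]:
    hand_train, hand_test, tool_test = simulate_region(shared, rng)
    decoder = train(hand_train)  # trained on hand trials only
    results[name] = (accuracy(decoder, hand_test), accuracy(decoder, tool_test))
    print(f"{name}: hand->hand {results[name][0]:.2f}, "
          f"hand->tool {results[name][1]:.2f}")
```

Training on one effector and testing on the other is one common way to ask whether two conditions share the same pattern code. The names `simulate_region` and `shared` and all parameter values are illustrative assumptions, not details taken from the study.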

This study was part of a broader line of research looking at the types of information about hand actions that are processed in different human brain regions. This research may help to inform the development of human brain-machine interfaces, in which electrodes implanted in the brain are used to control prosthetic limbs for patients with motor impairments (such as people with quadriplegia).

Reference

Gallivan, J. P., McLean, D. A., Valyear, K. F., & Culham, J. C. (2013). Decoding the neural mechanisms of human tool use. eLife, 2, e00425. http://dx.doi.org/10.7554/eLife.00425